Discover my work!

customers

My story

I started out by learning software development. I have always been curious and a technology enthusiast.

I look for ways to break, change, understand, and bypass any kind of logic, science, or technique.

I studied industry and electronics.

I worked for years in e-commerce as a system administrator while building my own open-source projects in parallel (some are used by the state, some see millions of daily uses, and so on).

Always breaking records: best code, best in competition, best in performance.

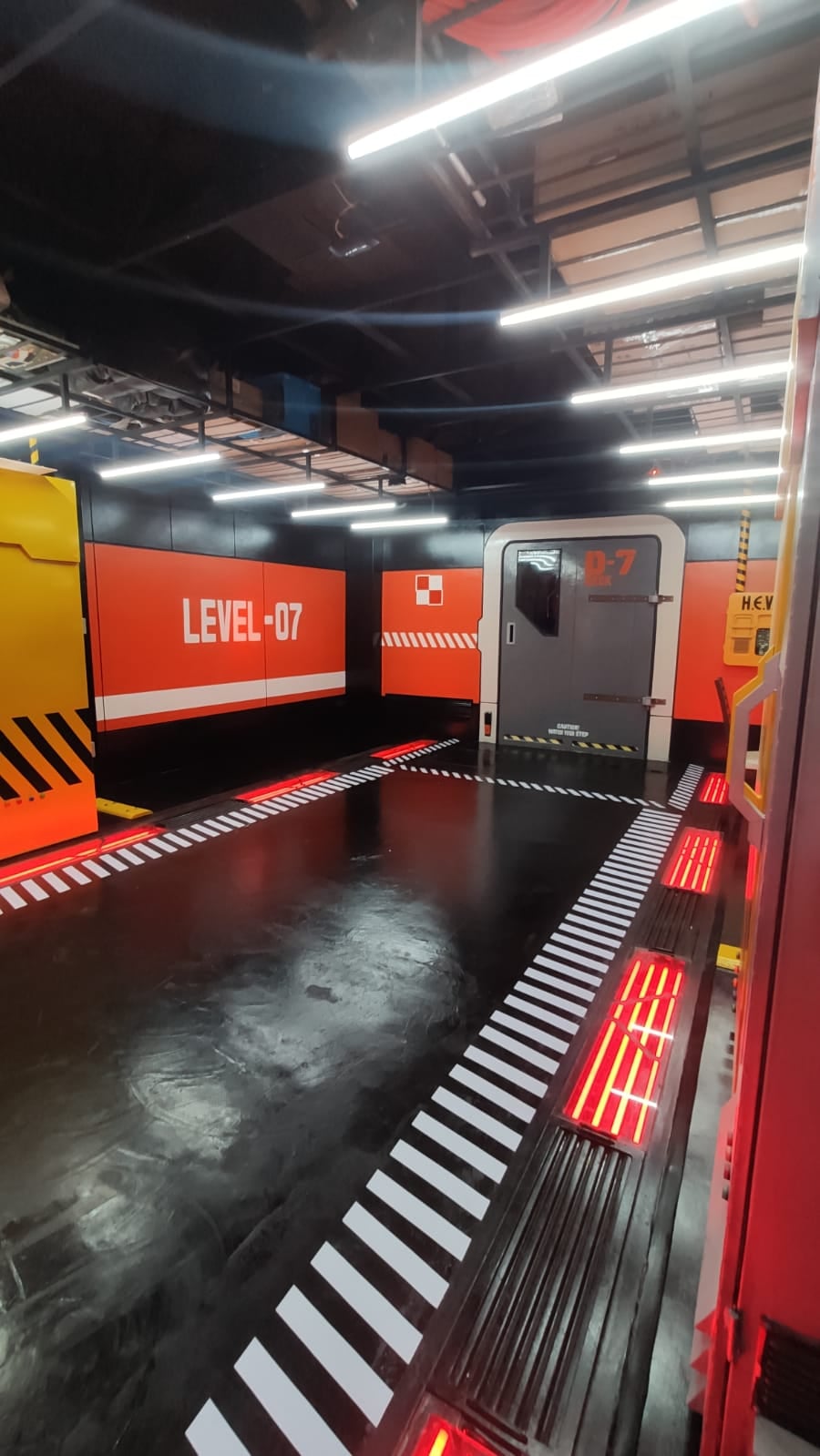

After some years, I started my own business, my own data center, and my own ISP, handling everything from the electronics and the electrical work to the building and the office design.

I teach technologies and techniques at university, do research and development, and think outside the box to create solutions for specific needs.

My Services

Research and development

Developing the best technology and better manufacturing processes

Product Design

Designing the product, manufacturing process, pricing, and target market

Company work

Working within your company as CTO, team leader, or project leader

Communication

Communicating inside your company to build your workforce, and communicating to the public what you do

Latest Publications

bye mdadm raid5! welcome btrfs raid1

Hi, I used btrfs over mdadm raid1. btrfs detected checksum corruption, so I investigated, and I saw that some SSDs have…

- October 14, 2023

- Herman BRULE

AS272840 the best in Bolivia

Hi, AS272840 (known as dan solution in Bolivia) now uses my technologies. As you can see, the bandwidth and latency…

- August 27, 2023

- Herman BRULE